
But when I used a learning rate of 0.02 with swish(), I got essentially the same results. Compared to the NN with tanh() and a learning rate of 0.01, the swish() version learned a bit slower. I took an existing 6-(10-10)-3 classifier I had, which used tanh() on the two hidden layers, and replaced tanh() with swish(). The demo run on the right uses swish() activation with a LR = 0.02. The demo run on the left uses tanh() activation with a LR = 0.01. So, adding what are essentially unnecessary functios to PyTorch can have a minor upside. But if swish() had been in PyTorch I would have discovered it earlier. Adding such a trivial function just bloats a large library even further. The fact that PyTorch doesn’t have a built-in swish() function is interesting. Update: I just discovered that PyTorch 1.7 does have a built-in swish() function. Z = self.oupt(z) # no softmax for multi-class .jpg)

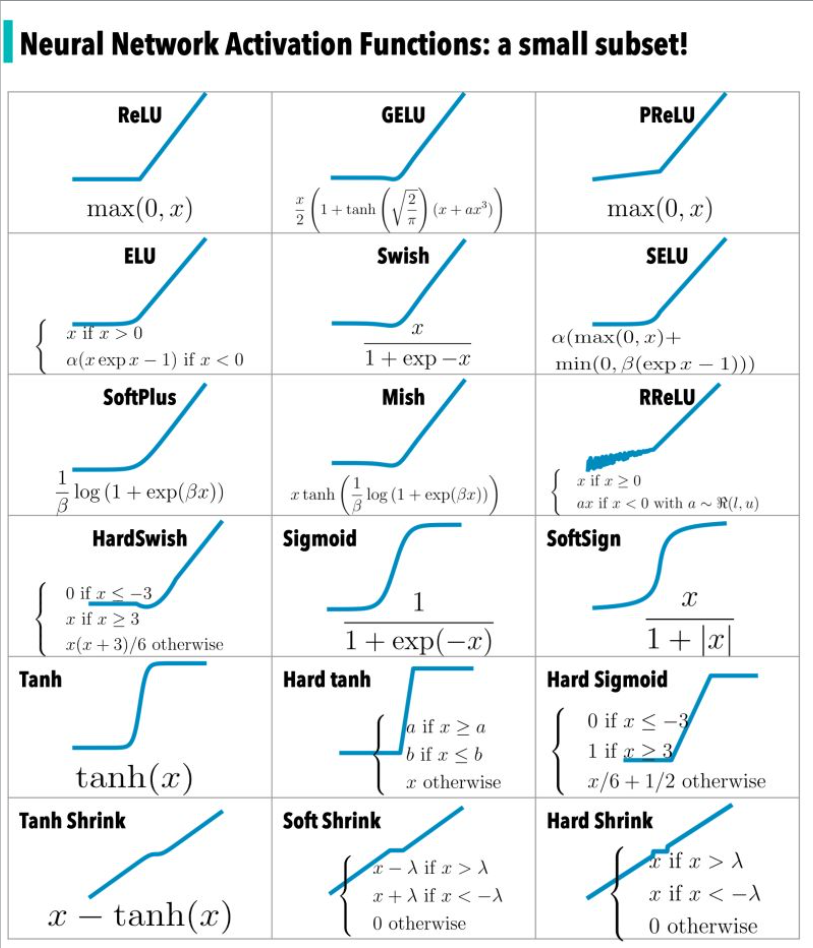

# z = T.tanh(self.hid1(x)) # replace tanh() w/ swish() However, it’s trivial to implement inside a PyTorch neural network class, for example: At the time I’m writing this bog post, Keras and TensorFlow have a built-in swish() function (released about 10 weeks ago), but the PyTorch library does not have a swish() function. The Wikipedia entry on swish() points out that swish() is sometimes called sil() or silu() which stands for sigmoid-weighted linear unit. The three related activation functions are: It’s sort of a cross between logistic sigmoid() and relu(). I made this graph of sigmoid(), swish(), and relu() using Excel. The swish() function was devised in 2017. Many variations of relu() followed but none were consistently better so relu() has been used as a de facto default since about 2015. Then relu() was found to work better for deep neural networks. In the early days of NNs, logistic sigmoid() was the most common activation function. I don’t know Thorsten personally, but he seems like a very bright and creative guy. My thanks to fellow ML enthusiast Thorsten Kleppe for pointing swish() out to me when he mentioned the similarity between swish() and gelu() in a Comment to an earlier post. I was recently alerted to the new swish() activation function for neural networks.
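To make the tanh()-to-swish() swap concrete, here is a minimal sketch of what a 6-(10-10)-3 network with swish() on both hidden layers might look like. The names `hid1` and `oupt` and the `T` alias come from the code fragments in the post; the class name `Net`, the layer name `hid2`, and the `swish()` helper are assumptions made for illustration, not the original demo code. On PyTorch 1.7 or later, the built-in `torch.nn.functional.silu` should give the same values as the hand-written helper.

```python
import torch as T

class Net(T.nn.Module):
    """Illustrative 6-(10-10)-3 classifier; only the layer sizes,
    hid1, and oupt are taken from the post."""

    def __init__(self):
        super().__init__()
        self.hid1 = T.nn.Linear(6, 10)   # 6 inputs -> 10 hidden
        self.hid2 = T.nn.Linear(10, 10)  # assumed name for the second hidden layer
        self.oupt = T.nn.Linear(10, 3)   # 3 output classes

    @staticmethod
    def swish(x):
        # swish(x) = x * sigmoid(x), written by hand so it runs on any PyTorch version
        return x * T.sigmoid(x)

    def forward(self, x):
        # z = T.tanh(self.hid1(x))  # replace tanh() w/ swish()
        z = self.swish(self.hid1(x))
        z = self.swish(self.hid2(z))
        z = self.oupt(z)  # no softmax for multi-class
        return z

T.manual_seed(1)
net = Net()
out = net(T.randn(4, 6))  # batch of 4 dummy items with 6 features each
print(out.shape)          # torch.Size([4, 3])
```

The output layer returns raw logits, on the assumption that training uses a loss such as `T.nn.CrossEntropyLoss` that applies softmax internally.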

Abstract of the paper "Searching for Activation Functions", by Prajit Ramachandran and 2 other authors: The choice of activation functions in deep networks has a significant effect on the training dynamics and task performance. Currently, the most successful and widely-used activation function is the Rectified Linear Unit (ReLU). Although various hand-designed alternatives to ReLU have been proposed, none have managed to replace it due to inconsistent gains. In this work, we propose to leverage automatic search techniques to discover new activation functions. Using a combination of exhaustive and reinforcement learning-based search, we discover multiple novel activation functions. We verify the effectiveness of the searches by conducting an empirical evaluation with the best discovered activation function. Our experiments show that the best discovered activation function, $f(x) = x \cdot \text{sigmoid}(\beta x)$, which we name Swish, tends to work better than ReLU on deeper models across a number of challenging datasets. For example, simply replacing ReLUs with Swish units improves top-1 classification accuracy on ImageNet by 0.9% for Mobile NASNet-A and 0.6% for Inception-ResNet-v2. The simplicity of Swish and its similarity to ReLU make it easy for practitioners to replace ReLUs with Swish units in any neural network.

It’s very difficult, but fun, to keep up with all the new ideas in machine learning.
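The formula in the abstract has a $\beta$ parameter that the simple x * sigmoid(x) form leaves at 1. The sketch below uses plain Python scalars (no PyTorch) to show how the three functions relate. The function names and the `beta` argument are illustrative choices, not code from the paper or the post.

```python
import math

def sigmoid(x):
    # Branch on the sign of x so math.exp() never receives a large positive argument
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def relu(x):
    return max(0.0, x)

def swish(x, beta=1.0):
    # f(x) = x * sigmoid(beta * x); beta = 1 is the form usually called silu()
    return x * sigmoid(beta * x)

# Print the three functions side by side at a few sample points
for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
    print(f"x={x:5.1f}  sigmoid={sigmoid(x):.4f}  "
          f"swish={swish(x):.4f}  relu={relu(x):.4f}")
```

With `beta=0.0` the function reduces to x / 2, and as `beta` grows it approaches relu(), which is one way to read the "cross between sigmoid() and relu()" description.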
